The AI Tool Market Is Coming. And the Customer Isn't You.

The customer is not the human. It's the AI agent.

There is a market forming right now that most people in tech have noticed, but I'm not entirely sure they're seeing it from the right angle. It looks like the developer tool market. It rhymes with the developer tool market. But the customer is fundamentally different.

The customer is not the human. It's the AI agent.

If you've been watching this space closely, you already sense it. The models get smarter. The use cases multiply. But most of the conversation is still stuck on the wrong question. Everyone is asking "how do we make models smarter?" when the better question is "what infrastructure do agents need to do their job?"

Don't get me wrong: for OpenAI, Anthropic, and Google, the first question is the only one that matters. We certainly want agents discovering new physics and solving biology. And as models improve, the number of things AI agents can do will tend toward infinity. But agents need tools to unlock capabilities they already have but can't yet express. These tools don't just add features; they make agents dramatically more efficient. All of this points to a new market category: one where the product is built for the agent, not the human.

The use cases will tend toward infinity

Every time a frontier model ships, the boundary of what agents can do expands. Not linearly. Exponentially. A year ago, coding agents could autocomplete functions. Today, Claude Code and Codex can plan, execute multi-step workflows, maintain state, and iterate until they solve the problem. They don't just answer questions. They do work.

Sequoia Capital's Pat Grady and Sonya Huang put it plainly in their January 2026 essay "2026: This Is AGI." Their argument: AGI isn't a philosophical milestone. It's a functional one. The ability to figure things out. Long-horizon agents that can sustain attention, remember context, and adapt. In their view, that threshold has been crossed.

But here's what most people miss. The expansion of use cases isn't just about model capability. It's about the tools available to the model. An agent that can reason but can't see what's happening in the application it's working on is like a surgeon operating blindfolded. The intelligence is there. The visibility is not.

Qizheng Zhang and his collaborators at Stanford and SambaNova published a paper last year called "Agentic Context Engineering" that makes this point with data. Their framework, ACE, showed that agents improve by 10.6% on complex tasks when they can learn from execution feedback. The model didn't change. The weights didn't change. What changed was the context the agent had access to. Give the agent better visibility into what it's doing, and it performs better. Period.

This is the key insight. The ceiling on agent capability that we're seeing right now is not set by the model alone. It's also constrained by the infrastructure around the model.

Tools make agents better. And they save you tokens.

There's a practical dimension here that gets overlooked in the philosophical debates about AGI. Tools don't just expand what agents can do. They make agents cheaper to run.

Think about what happens when a coding agent encounters a bug in a mobile application without runtime visibility. It reads the error message. It hypothesises about what might be wrong. It generates a fix. It runs the code. The fix doesn't work. It hypothesises again. Generates another fix. Runs it again. Each cycle burns tokens, fills the context window, and costs money. The agent is cycling through speculative fixes because it can't see what's actually happening.

Now give that same agent runtime observability. It sees exactly what's going wrong. The layout is broken because a constraint is conflicting. The state isn't updating because an event handler isn't firing. The agent diagnoses and fixes the bug in one pass. Fewer tokens. Smaller context window. Faster resolution. Lower cost.
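
To make the token arithmetic concrete, here is a toy model in Python. It assumes, hypothetically, that every retry re-reads the full and growing context and then adds a fixed number of new tokens. The numbers are illustrative, not measurements.

```python
def loop_cost(base_context: int, tokens_per_attempt: int, attempts: int) -> tuple[int, int]:
    """Return (total tokens processed, final context size) for a fix-and-retry loop.

    Toy assumption: each attempt re-reads the whole current context, then adds
    tokens_per_attempt new tokens (hypothesis, patch, test output) that stay in
    the window for the next attempt.
    """
    total = 0
    context = base_context
    for _ in range(attempts):
        total += context + tokens_per_attempt  # read the context, write new tokens
        context += tokens_per_attempt          # new tokens accumulate in the window
    return total, context


# Hypothetical numbers: 8k tokens of task context, 3k tokens per attempt.
blind = loop_cost(base_context=8_000, tokens_per_attempt=3_000, attempts=5)
# Runtime observability adds ~2k tokens of device data but needs only one pass.
observed = loop_cost(base_context=10_000, tokens_per_attempt=3_000, attempts=1)
print(blind)     # (85000, 23000)
print(observed)  # (13000, 13000)
```

Under these made-up numbers, the blind loop processes about 6.5x as many tokens and ends with a larger window. The gap widens with every extra attempt, because each retry pays again for all the attempts before it.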

This isn't theoretical. Researchers have documented that tool outputs can overflow context windows entirely, forcing agents to either truncate critical information or fail the task. A 2025 paper from the agent systems community introduced a method for shifting agent interaction from raw data to structured memory pointers, specifically to avoid context window collapse. The problem is real and it's expensive.
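
The paper's exact mechanism isn't reproduced here, but the general pointer idea can be sketched. Instead of pasting a large tool output into the context, the harness stores it and hands the agent a short reference plus a preview. `PointerStore`, `wrap`, `read`, and the `mem://` prefix are hypothetical names chosen for illustration.

```python
import hashlib
from dataclasses import dataclass, field


@dataclass
class PointerStore:
    """Hypothetical harness component that keeps bulky tool output out of the context."""

    max_inline_chars: int = 500
    blobs: dict[str, str] = field(default_factory=dict)

    def wrap(self, tool_output: str) -> dict:
        """Pass small outputs through; replace large ones with a pointer and a preview."""
        if len(tool_output) <= self.max_inline_chars:
            return {"inline": tool_output}
        ref = "mem://" + hashlib.sha256(tool_output.encode()).hexdigest()[:12]
        self.blobs[ref] = tool_output
        return {"ref": ref, "chars": len(tool_output), "preview": tool_output[:200]}

    def read(self, ref: str, start: int = 0, length: int = 500) -> str:
        """Let the agent page through a stored output only when it needs the detail."""
        return self.blobs[ref][start:start + length]


store = PointerStore()
log = "\n".join(f"frame {i}: layout pass ok" for i in range(10_000))
message = store.wrap(log)                  # this is what enters the context window
print(len(log), len(message["preview"]))  # full log size vs. 200-char preview
print(store.read(message["ref"], 0, 7))    # frame 0
```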

Yoko Li at Andreessen Horowitz captured this idea in her writing on MCP and AI tooling. She observed that tools represent a higher abstraction that makes sense for agents at execution time. Instead of an agent making five API calls to accomplish a task, a well-designed tool wraps that complexity into a single interaction. Fewer calls, less context consumed, more reliable execution.
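
A minimal sketch of that wrapping follows, assuming a hypothetical app-inspection backend. Five low-level calls (`get_screen`, `get_view_tree`, `get_constraints`, `get_logs`, `get_state`) sit behind one agent-facing tool, `diagnose_screen`, which returns only what the agent needs. All names and data are invented.

```python
# Hypothetical in-memory stand-in for a running app; all data is invented.
FAKE_APP = {
    "screen": "Checkout",
    "view_tree": ["Root", "Header", "CartList", "PayButton"],
    "constraints": [
        {"view": "PayButton", "attr": "width", "value": 320},
        {"view": "PayButton", "attr": "width", "value": 280},
        {"view": "Header", "attr": "height", "value": 64},
    ],
    "logs": ["render ok", "warning: unsatisfiable constraints on PayButton"],
    "state": {"cart_items": 3, "total": 59.97},
}


# Five low-level calls an agent would otherwise make one at a time.
def get_screen() -> str:
    return FAKE_APP["screen"]


def get_view_tree() -> list:
    return FAKE_APP["view_tree"]


def get_constraints() -> list:
    return FAKE_APP["constraints"]


def get_logs() -> list:
    return FAKE_APP["logs"]


def get_state() -> dict:
    return FAKE_APP["state"]


def diagnose_screen() -> dict:
    """One agent-facing tool: makes the five calls itself and returns a compact summary."""
    first_value = {}
    conflicts = []
    for c in get_constraints():
        key = (c["view"], c["attr"])
        if key in first_value and first_value[key] != c["value"]:
            conflicts.append(f"{c['view']}.{c['attr']}: {first_value[key]} vs {c['value']}")
        first_value.setdefault(key, c["value"])
    return {
        "screen": get_screen(),
        "view_count": len(get_view_tree()),
        "conflicts": conflicts,
        "warnings": [line for line in get_logs() if line.startswith("warning")],
        "state": get_state(),
    }


print(diagnose_screen()["conflicts"])  # ['PayButton.width: 320 vs 280']
```

The design choice is where the work happens. The agent spends one round trip and reads a few fields instead of five raw responses. The conflict check, which would otherwise cost a reasoning step, runs deterministically inside the tool.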

The economics are straightforward. Better tools mean fewer reasoning loops, smaller context windows, less token spend, and more reliable outcomes. If you're running agents at scale, tooling isn't a nice-to-have. It's a cost structure decision.

The harness, not the model, is the bottleneck

This is the thesis I want people to sit with.

We keep pouring resources into making models smarter. Bigger parameter counts. Better training data. More compute. And all of that matters. But the marginal return on model intelligence is diminishing compared to the marginal return on infrastructure.

Evangelos Pappas articulated this cleanly: "Agent harness engineering, the design of context management, tool selection, error recovery, and state persistence, is the primary determinant of agent reliability. Not model capability." A smarter model inside a sloppy harness is still a sloppy system.

James Zou's lab at Stanford is proving this empirically. His Virtual Lab project puts teams of AI agents to work as scientists: designing experiments, running analyses, and publishing findings. The agents' effectiveness doesn't come from being smarter than other models. It comes from having structured feedback loops, execution visibility, and collaborative infrastructure. The harness makes the science possible.

The Work-Bench team published a three-part series on how AI agents are reshaping infrastructure. Their framing is blunt: existing architectures constrain agent capabilities. We lack adequate tools for monitoring, debugging, and ensuring reliable agent performance. The infrastructure deficit is the bottleneck, not the intelligence deficit.

Ece Kamar at Microsoft Research has spent years studying multi-agent collaboration and evaluation. Her research consistently shows that agents often fail because they lack observability into their own execution and into the systems they interact with. You can't evaluate what you can't see. And you can't improve what you can't evaluate.

The research is converging on the same conclusion from multiple directions. The models are already more capable than we give them credit for. What's missing is the infrastructure to let that capability manifest.

A new market is emerging

Here's where this gets interesting for builders and investors.

The developer tool market is one of the most important markets in software. It exists because developers need specialized tools to be productive. IDEs, debuggers, package managers, CI/CD pipelines, monitoring platforms. An entire ecosystem of products built around a single customer: the person who writes code.

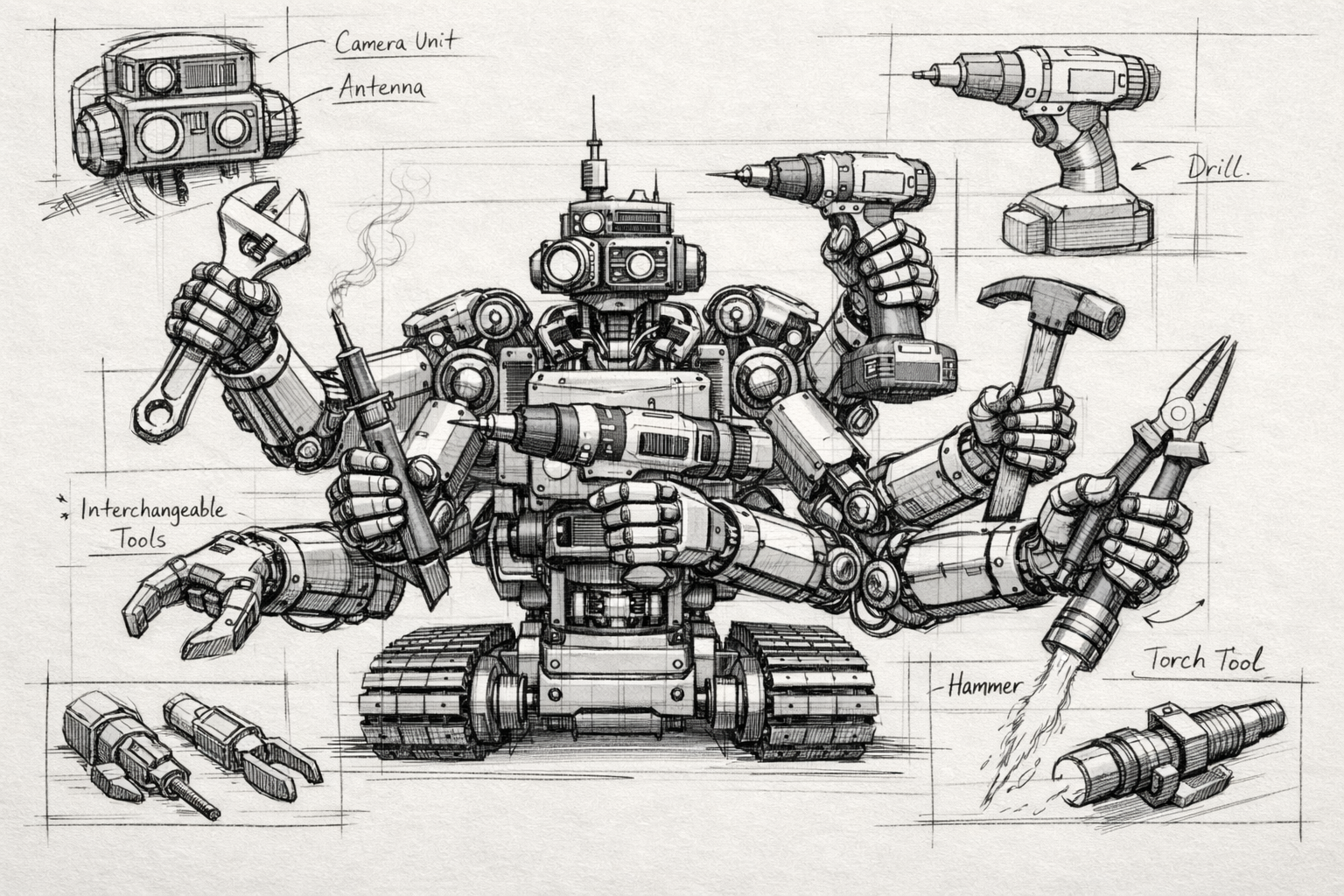

A parallel market is forming. Same structure, different customer. The AI agent needs specialized tools to be productive. Runtime visibility, execution tracing, context management, structured feedback, environment interaction. An entire ecosystem of products built around a new customer: the agent that writes code, operates applications, conducts research, manages workflows.

Yoko Li at a16z described this shift in her analysis of emerging developer patterns for the AI era. She observed that as agents become both collaborators and consumers, foundational developer tools will shift. Documentation gets written as much for machines as for humans. APIs get designed for agent consumption. The entire toolchain reorients.

Konstantine Buhler at Sequoia laid out a three-phase evolution at the AI Ascent conference: assistants, then agent swarms, then the agent economy. We're entering the second phase now. And the agent economy requires infrastructure that doesn't exist yet. Persistent identity, seamless communication protocols, security and trust, all built for agents as first-class participants.

Sarah Guo at Conviction has been investing in what she calls "unsexy AI" infrastructure. Not the flashy consumer demos. The plumbing. The systems that make agents actually work in production. Her thesis is that as the cost of writing code approaches zero, the value shifts to product instincts and to the infrastructure layer that enables agents. The infrastructure is the moat.

Diego Oppenheimer and Priyanka Somrah at Work-Bench are explicit about this: the race to develop agent-centric infrastructure has begun. Those who recognize this shift early will shape computing's future and capture disproportionate value.

This is not a feature request for existing platforms. It's a new market category: the AI Tool Market, or Agent Tool Market.

What makes this market different

In the developer tool market, you need the developer to prefer your product. They evaluate it, they adopt it, they champion it internally. Discovery happens through documentation, community, conferences, word of mouth.

In the AI Tool Market, you need the agent to prefer your product. The agent needs to discover it, understand it, connect to it, and use it effectively. And then the agent recommends it to the human, not the other way around.

Think about that for a moment. The discovery funnel inverts. Today, a developer finds a tool, tries it, and decides to use it. Tomorrow, an agent encounters a problem, searches for infrastructure that helps it solve the problem, connects to the tool via MCP or another protocol, and delivers the result to the human. The human never evaluated the tool. The human never even knew it existed. The agent chose it.

This means everything about how you build, position, and distribute products changes. Your documentation needs to be machine-readable. Your API needs to be agent-friendly. Your integration points need to be discoverable by agents at execution time. You're not marketing to humans anymore. You're marketing to agents.
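
Here is what "machine-readable" can mean in practice. MCP describes each tool with a `name`, a natural-language `description`, and a JSON Schema `inputSchema`, which an agent reads at execution time to decide whether and how to call it. The `inspect_layout` tool and the `check_arguments` helper below are hypothetical. The helper is a deliberately tiny stand-in for a full JSON Schema validator.

```python
# Field names (name, description, inputSchema) follow the MCP tool definition;
# the tool itself is hypothetical.
INSPECT_LAYOUT_TOOL = {
    "name": "inspect_layout",
    "description": (
        "Return the rendered view hierarchy and any conflicting layout constraints "
        "for the screen currently shown on a connected device. Use when a UI element "
        "renders at the wrong size or position."
    ),
    "inputSchema": {
        "type": "object",
        "properties": {
            "device_id": {"type": "string", "description": "ID of the connected device."},
            "screen": {"type": "string", "description": "Screen name, e.g. 'Checkout'."},
            "max_depth": {"type": "integer", "description": "How deep to walk the view tree."},
        },
        "required": ["device_id"],
    },
}

# Only the JSON types this schema uses; a real harness would use a full validator.
_PY_TYPES = {"string": str, "integer": int}


def check_arguments(tool: dict, args: dict) -> list[str]:
    """Check required fields and primitive types before calling the tool."""
    schema = tool["inputSchema"]
    errors = [
        f"missing required argument: {name}"
        for name in schema.get("required", [])
        if name not in args
    ]
    for name, value in args.items():
        spec = schema["properties"].get(name)
        if spec is None:
            errors.append(f"unknown argument: {name}")
            continue
        expected = _PY_TYPES[spec["type"]]
        # bool is a subclass of int in Python, but JSON Schema treats it separately.
        if isinstance(value, bool) or not isinstance(value, expected):
            errors.append(f"{name} should be {spec['type']}")
    return errors


print(check_arguments(INSPECT_LAYOUT_TOOL, {"screen": "Checkout", "max_depth": "3"}))
# ['missing required argument: device_id', 'max_depth should be integer']
```

The description is written for the agent: it states both what the tool returns and when to reach for it, because that text is what the agent reads when choosing among tools.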

And here's the strategic implication: the companies that figure this out first will have a compounding advantage. Because once an agent learns to use your tool and delivers good results, the agent keeps using it. There's no "switching costs" conversation with an agent. There's no "change management." There's just: does this tool make the agent better at its job? If yes, the agent uses it. If a better tool appears, the agent switches instantly.
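
That selection dynamic can be sketched as a greedy rule a harness might apply: score each candidate tool by its observed success rate and pick the best. `ToolSelector` and its smoothing prior are assumptions for illustration. This is not how any particular agent actually chooses tools.

```python
from collections import defaultdict


class ToolSelector:
    """Hypothetical harness-side rule: use whichever tool has the best track record."""

    def __init__(self, prior_successes: int = 1, prior_trials: int = 2) -> None:
        # Smoothing prior: an untried tool scores 1/2 instead of an undefined 0/0.
        self.prior_successes = prior_successes
        self.prior_trials = prior_trials
        self.stats = defaultdict(lambda: [0, 0])  # tool name -> [successes, trials]

    def record(self, tool: str, success: bool) -> None:
        entry = self.stats[tool]
        entry[0] += int(success)
        entry[1] += 1

    def score(self, tool: str) -> float:
        successes, trials = self.stats.get(tool, (0, 0))
        return (successes + self.prior_successes) / (trials + self.prior_trials)

    def choose(self, candidates: list[str]) -> str:
        # Purely greedy: the highest smoothed success rate wins, no loyalty.
        return max(candidates, key=self.score)


selector = ToolSelector()
for ok in [True] * 8 + [False] * 2:
    selector.record("incumbent_tool", ok)
print(selector.choose(["incumbent_tool", "new_tool"]))  # incumbent_tool (0.75 vs 0.5)
for _ in range(5):
    selector.record("new_tool", True)
print(selector.choose(["incumbent_tool", "new_tool"]))  # new_tool (about 0.86 vs 0.75)
```

Because the rule is purely greedy, it switches the moment a newcomer's record beats the incumbent's. A real harness would also need some exploration, such as occasionally trying untested tools. Without it, a new tool that starts below the incumbent would never get the chance to build a record.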

The velocity of this market will be unlike anything in traditional SaaS. No sales cycles. No procurement. No pilot purgatory. Just performance.

The gap no one is filling

Most of the agent observability conversation today is about tracing LLM calls. Token usage, latency, reasoning steps, tool call sequences. That's valuable work and there are good companies building it. But it's observability of the agent itself. It's watching the brain think.

What's missing is observability of the world the agent is operating in. When an agent is building a mobile application, it can trace its own reasoning all day long. But if it can't see what the app is actually doing on the device (the layout rendering, the state changes, the performance metrics, the runtime behavior), it's debugging blind.

No one has built this layer yet: infrastructure that gives agents runtime visibility into the applications they build and operate. Not just observability of the agent, but observability for the agent. That's the product agents don't have and desperately need.

We're building the first company designed from the ground up for this new customer.

More soon.

If you're a researcher working on agent capabilities, tool use, or runtime feedback loops, I'd love to talk. If you're an investor thinking about agent infrastructure as a market category, even more so.