Incentive Vectors' Superalignment

Why the law-making process can't match society's needs.

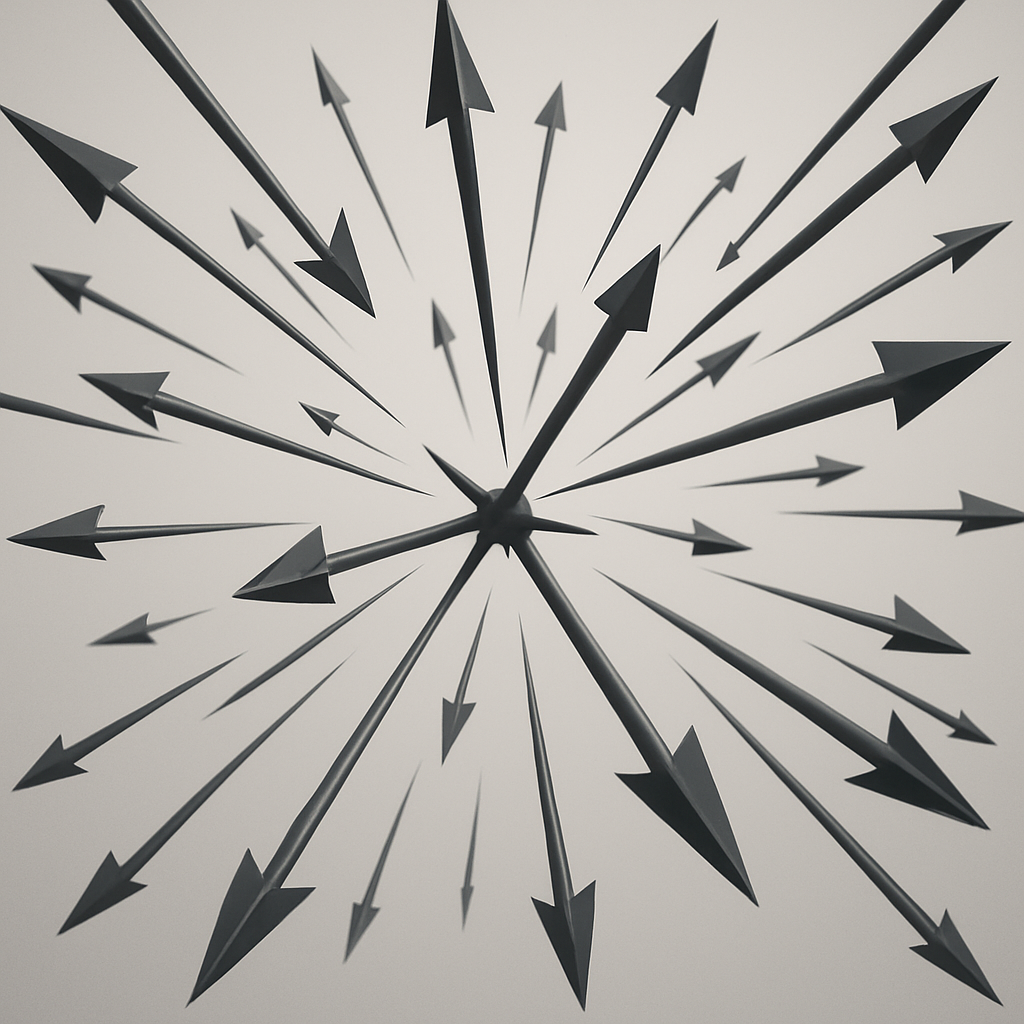

We usually talk about laws and regulations in moral or political language. A law is “fair” or “unfair”, “pro-business” or “anti-worker”, “tough on crime” or “soft on crime”. Those labels are emotionally satisfying, but they do not help much when you are trying to understand how an entire system behaves over time. A more useful way to think about regulation borrows a very simple idea from physics: the idea of a vector.

A vector is one of the most basic objects in physics and geometry. It has two features and nothing more: a direction and a magnitude. Imagine a small boat on a lake. One friend pulls the boat toward the north. Another friend pulls it toward the east. Each pull has a direction and a strength, so each pull is a vector. When both friends pull at the same time, the boat does not move purely north or purely east. It drifts along a diagonal, somewhere between those two directions. That diagonal is the direction of the resultant vector: the single combined pull, with its own direction and strength, that you get by adding both pulls together, taking their directions and strengths into account.
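To make the boat example concrete, here is a minimal Python sketch of vector addition. The strengths (3 units north, 4 units east) are made up for illustration, and the helper names `resultant`, `magnitude` and `angle_from_east` are hypothetical; the point is only that the combined pull is the component-by-component sum of the individual pulls.

```python
import math
from typing import Iterable, Tuple

# A 2-D vector stored as (east, north) components.
Vector = Tuple[float, float]


def resultant(vectors: Iterable[Vector]) -> Vector:
    """Add vectors component by component to get the single combined pull."""
    east, north = 0.0, 0.0
    for e, n in vectors:
        east += e
        north += n
    return (east, north)


def magnitude(v: Vector) -> float:
    """Strength of a vector (Pythagoras on its two components)."""
    return math.hypot(v[0], v[1])


def angle_from_east(v: Vector) -> float:
    """Direction in degrees, measured counter-clockwise from east toward north."""
    return math.degrees(math.atan2(v[1], v[0]))


if __name__ == "__main__":
    north_pull = (0.0, 3.0)  # friend pulling north with strength 3 (illustrative units)
    east_pull = (4.0, 0.0)   # friend pulling east with strength 4
    r = resultant([north_pull, east_pull])
    print(r, magnitude(r), round(angle_from_east(r), 1))  # (4.0, 3.0) 5.0 36.9
```

The resultant has strength 5 and points about 37 degrees north of east: a diagonal that neither friend pulled toward on their own.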

This mental model is powerful because it shows something that is easy to forget in politics. What actually happens is not determined by one isolated force but by the sum of many small forces acting together. You can have many pushes, all with clear intentions, and still end up drifting somewhere no one planned to go. That is exactly what happens with laws and regulations.

Every regulation is, in effect, an incentive vector. It points in some direction by rewarding or penalising certain behaviours, and it has a magnitude determined by how strong those rewards or penalties are, how strict the enforcement is, and how hard it is to comply. A subsidy for renewable energy is a vector that points capital and talent toward green projects. Strict zoning rules that make it very hard to build new housing are vectors that push developers either away from starting projects at all or toward hunting for loopholes instead of building straightforwardly. Medical safety regulations are vectors that push pharmaceutical companies toward longer trials, more documentation, and more caution, which improves safety but also slows innovation. Tax rules, liability rules, reporting requirements, licence regimes: each of them is a little arrow pointing in some direction in the space of possible behaviours.

Crucially, those arrows do not look the same for everyone. The same regulation can create very different vectors depending on who you are and what you do. A new environmental rule might be a small nudge for a large incumbent with a legal department and a compliance team, but a huge shove for a small startup that now has to hire consultants and lawyers before it can even get to market. A licensing requirement might be a gentle tap for a well-capitalised bank and a brick wall for a community lender. To a gig worker, a change in employment law can be the difference between flexibility and precarity; to a salaried employee in a big firm, the same rule may barely register. In physics language, we do not have one uniform vector field; we have a field that varies with position. Where you are in the economy, which industry you work in, and what resources you have all change the direction and magnitude of the regulatory vectors that act on you.

If a state only had a handful of regulations, all written at the same moment by a single mind, it might be possible to keep this picture in your head. Modern societies, however, are governed by thousands upon thousands of rules, written over many decades by different agencies and parliaments, under different political pressures, reacting to crises that have long since passed. Each one introduces another vector into the system, and each one has different effects for different actors. The overall behaviour of the economy and of society is the tangled resultant of all these heterogeneous pushes.
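The idea that each rule pushes each actor differently can be sketched in a few lines of Python. Everything here is hypothetical: the two axes of the behaviour space, the rule names, the two actor types and every number. The sketch shows only the mechanism described above, where each rule contributes a different vector to each type of actor and each actor therefore experiences its own resultant.

```python
from typing import Dict, Tuple

Vector = Tuple[float, float]

# Hypothetical 2-D behaviour space for a firm:
#   x: effort spent building and innovating (positive = more)
#   y: effort spent on compliance workarounds and lobbying (positive = more)
# Each rule maps an actor type to the push it exerts on that actor. All values are invented.
RULES: Dict[str, Dict[str, Vector]] = {
    "environmental_rule": {"incumbent": (-0.2, 0.1), "startup": (-2.0, 0.5)},
    "licensing_rule":     {"incumbent": (-0.1, 0.3), "startup": (-1.5, 0.2)},
    "rd_subsidy":         {"incumbent": (1.0, 0.0),  "startup": (1.0, 0.0)},
}


def resultant_for(actor: str, rules: Dict[str, Dict[str, Vector]]) -> Vector:
    """Sum the pushes that every rule exerts on one type of actor."""
    x = sum(pushes[actor][0] for pushes in rules.values())
    y = sum(pushes[actor][1] for pushes in rules.values())
    return (x, y)


if __name__ == "__main__":
    for actor in ("incumbent", "startup"):
        x, y = resultant_for(actor, RULES)
        print(f"{actor}: build={x:+.2f}, lobby={y:+.2f}")
```

With these invented numbers the incumbent's resultant points toward building (build=+0.70), while the startup's points away from it (build=-2.50), even though both face exactly the same three rules.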

This is where the problem starts. Most governments say they aim at broadly similar goals: prosperity, safety, fairness, innovation, and sustainability. If those goals were the only thing that mattered and if policy were designed as a coherent whole, you would want the sum of all regulatory vectors, across all sectors and social groups, to point roughly in that direction. Instead, because rules are layered gradually and often reactively, you get a messy field of arrows pointing everywhere at once.

One cluster of rules encourages competition. Another quietly protects large incumbents by burying small newcomers in compliance costs. Planning rules and housing subsidies talk about affordability while, in combination, they limit the supply of new homes and push prices up. Environmental standards are introduced with one logic, then undercut by subsidies or exemptions designed for short-term political relief. Financial regulations do their best to suppress visible forms of risk, even as they push risk-taking into less visible corners of the system. A rule that looks like a gentle alignment tweak for a multinational can land as a knockout punch for a two-person startup. Each piece may be defensible in isolation. Together, and experienced very differently by different players, they produce a resultant vector that often points toward outcomes no one would have defended openly: systems that favour lobbying over innovation, short-term appearances over long-term resilience, stagnation in essential goods like housing, and complex mazes that only well-lawyered incumbents can afford to navigate.

This gap between what people say they want and what the incentives actually produce is what I am calling a superalignment problem. Incentive Vectors’ Superalignment is not a question of whether any single law has good intentions. It is a question about the alignment of the resultant: once you add up all the uneven, actor-specific pushes, are we moving in a direction that is genuinely good for society, or have we drifted into a weird diagonal no one meant to choose?
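The question about the alignment of the resultant can be given a rough numerical form. A standard way to compare two directions is the cosine of the angle between them. The Python sketch below assumes, purely for illustration, that society's goals can be written as a single goal vector over a few axes and that the resultant can be measured on the same axes; both are strong simplifications, and the function names are hypothetical.

```python
import math
from typing import Sequence


def alignment(resultant: Sequence[float], goal: Sequence[float]) -> float:
    """Cosine of the angle between the resultant and the goal direction.

    1.0 means perfectly aligned, 0.0 means sideways drift, -1.0 means opposed.
    """
    if len(resultant) != len(goal):
        raise ValueError("vectors must have the same number of axes")
    dot = sum(r * g for r, g in zip(resultant, goal))
    norm = math.hypot(*resultant) * math.hypot(*goal)  # multi-argument hypot needs Python 3.8+
    if norm == 0:
        raise ValueError("alignment is undefined for a zero vector")
    return dot / norm


def drift_degrees(resultant: Sequence[float], goal: Sequence[float]) -> float:
    """Angle in degrees between where the system moves and where we want it to go."""
    cos = max(-1.0, min(1.0, alignment(resultant, goal)))  # clamp rounding noise before acos
    return math.degrees(math.acos(cos))


if __name__ == "__main__":
    # Hypothetical axes weighted equally, e.g. (prosperity, safety, sustainability).
    goal = (1.0, 1.0, 1.0)
    measured = (2.0, 0.5, -1.0)  # invented resultant
    print(round(alignment(measured, goal), 3), round(drift_degrees(measured, goal), 1))
```

A value near 1 means the resultant points where we intend; a value near 0 or below means the system has drifted sideways or backwards. Because the functions accept any number of axes, the goal vector could in principle list the goals named earlier, such as prosperity, safety, fairness, innovation and sustainability, although choosing their weights is itself a political decision.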

If that sounds abstract, it helps to look at a real attempt to make policy more empirical and more behaviourally grounded. In the United Kingdom, during David Cameron’s government, a small team was created inside the Cabinet Office: the Behavioural Insights Team, quickly nicknamed the Nudge Unit. Its mission was modest but radical in method. Instead of assuming people behave like rational calculators who carefully read every law and optimise, the team started from the messy reality of human psychology. They rewrote tax letters in different ways to see which wording actually led to more people paying on time. They experimented with the order of choices in forms to see which designs led to better outcomes, such as higher savings rates or more accurate self-reporting. They ran controlled trials, compared different versions, measured what people actually did, and then recommended changes to policy based on evidence rather than theory alone.

What the Behavioural Insights Team was really doing, in the language of vectors, was experimentally tweaking small incentive vectors and watching how the resulting behaviour shifted. Change the message, and you slightly rotate the vector that a tax rule creates. See more compliance, and you know you have moved closer to your intended direction. See unintended side effects, and you learn that the vector had strange consequences you did not anticipate. The important part here is not just nudging in the pop-psychology sense. It is the existence of a laboratory mindset inside government: a place where policy is treated as a hypothesis about how people will behave and then tested against reality.

A natural extension of this idea is that every government should have a behavioural lab, or several, tasked not just with tweaking letters but with understanding the broader incentive landscape. Their job would be to study how people, companies, and institutions in different sectors actually respond to regulations, and to map the second-order effects. A rule aimed at financial safety might reduce visible risk on large bank balance sheets while pushing leverage into small, poorly supervised entities. A subsidy aimed at helping a particular industry might, over time, entrench a narrow set of technologies and make it harder for new, better approaches to emerge. Without behavioural feedback, policymakers see only the stated purpose, not the real pattern of vectors that different groups experience.

Alongside this, regulations themselves need to be written in a way that allows for learning. Many legal systems lean toward very rigid, highly prescriptive rules, with precise thresholds, fixed processes, and detailed instructions that leave little room for interpretation. This gives a feeling of clarity but also freezes the incentive vector at a particular angle and magnitude, even as the world around it changes and as its impact diverges between actors. A more promising approach treats laws as defining direction, not exact choreography. The statute specifies the outcome space we care about: safer roads, more resilient banks, broader access to housing, lower emissions, trustworthy AI systems. The authorities responsible for implementing and enforcing those rules are then given the latitude to adjust how they get there, through guidance, enforcement priorities, targeted experiments and tailored approaches for different types of actors, as long as they can justify those adjustments in terms of the goals the law set.

In other words, the law says where the vector should roughly point. Regulators then fine-tune its exact angle and strength, and sometimes differentiate how it is applied to different types of entities, in response to evidence about how people actually behave. That means asking a different question in day-to-day work. Instead of asking only whether the law has been applied in a formally correct way, officials ask whether the way it is being applied, in each context, is pushing behaviour toward the outcomes the law was written to achieve. When the answer is no, they have the authority, within the principles set by parliament, to recalibrate.

This is very close to the mindset used in software development and tech startups. Software teams do not expect that version 1 of a product will fit users perfectly. They ship something small, watch how people use it, learn where it breaks or confuses or frustrates, then iterate. Features are added or removed, interfaces are refined, performance is improved through repeated profiling and optimisation. Everything is treated as provisional and open to improvement as new information comes in. The important thing is not that the first draft is perfect, but that the feedback loop is fast and honest.

If we applied this logic to regulation, the process would look quite different from traditional lawmaking. You would still start by defining clear goals, but you would acknowledge that you do not yet know the best combination of incentives that will move behaviour toward those goals for very different actors. You would map the existing rules that already shape behaviour, and you would pay attention to how their vectors vary between big and small firms, between sectors, and between individuals in different circumstances. You would then introduce changes in ways that allow measurement, such as pilots in particular regions or industries, regulatory sandboxes where rules are relaxed under supervision, and small-scale experiments rather than instant nationwide shifts. You would measure not only whether people ticked new boxes or filed new forms, but whether underlying behaviours and outcomes actually changed in the intended direction for the groups you care about. Then you would strengthen the vectors that clearly help, weaken or repeal those that do not, and simplify where overlapping rules create perverse incentives or uneven burdens.
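The final step of that loop, strengthening what helps and weakening or repealing what does not, can be sketched as a single review cycle. This Python example is hypothetical throughout: it assumes each rule's vector has already been estimated (in practice from pilots or sandboxes, which is the hard part), that a goal direction has been agreed, and that `step` and `repeal_below` are arbitrary tuning parameters. Each rule's contribution is measured as its projection onto the goal direction.

```python
import math
from typing import Dict, Tuple

Vec = Tuple[float, float]


def contribution(v: Vec, goal: Vec) -> float:
    """Signed length of v along the goal direction (positive = helps, negative = hurts)."""
    return (v[0] * goal[0] + v[1] * goal[1]) / math.hypot(goal[0], goal[1])


def recalibrate(rules: Dict[str, Vec], goal: Vec,
                step: float = 0.25, repeal_below: float = 0.1) -> Dict[str, Vec]:
    """One hypothetical review cycle over a set of rules.

    Rules that push toward the goal are strengthened by `step`, rules that push
    against it are weakened by `step`, and any rule whose strength falls below
    `repeal_below` is dropped. Directions are left unchanged.
    """
    updated: Dict[str, Vec] = {}
    for name, v in rules.items():
        c = contribution(v, goal)
        if c > 0:
            factor = 1 + step
        elif c < 0:
            factor = 1 - step
        else:
            factor = 1.0  # orthogonal to the goal: leave it as it is
        new_v = (v[0] * factor, v[1] * factor)
        if math.hypot(*new_v) >= repeal_below:
            updated[name] = new_v
    return updated


if __name__ == "__main__":
    rules = {"helps": (1.0, 0.0), "hurts": (-1.0, 0.0), "tiny_harm": (-0.1, 0.0)}
    print(recalibrate(rules, goal=(1.0, 0.0)))  # {'helps': (1.25, 0.0), 'hurts': (-0.75, 0.0)}
```

Run repeatedly, this sketch would grow helpful rules without limit and ignores overlapping rules and uneven burdens across actors, so a real process would cap strengths and check per-actor effects before simplifying.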

Once you accept this, superalignment becomes a design problem rather than a purely ideological fight. The question is no longer just “Is this law just?” or “Does this policy align with my values?” Those are vital questions, but they are not enough. You also have to ask: “When this law interacts with all the other laws already in place, and when you look at its effect on different people and industries, what does it do to the resultant? Does it rotate the overall direction of our incentive system toward long-term human flourishing, or push it away, and for whom?”

Most societies do not ask that last question explicitly. They accumulate vectors and hope for the best. The result is predictable drift. To correct it, we need institutions that can see the whole field of incentives clearly, including how it feels from different positions in the economy, experiment with adjustments, and iterate like engineers rather than legislate once and forget. Behavioural labs, principle based laws, and an iterative approach to regulation are tools for that job.

In the end, societies move not according to what their laws are called or what speeches are given about them, but according to the incentives embedded in those laws and the way they interact in real lives and real businesses. Thinking in terms of vectors makes that reality harder to ignore. If we care about where we are going, we have to care about the resultant. Incentive Vectors’ Superalignment is simply the name for getting that resultant, across all those different trajectories, to point somewhere we would actually choose if we saw it clearly.